Building a Website with Performance in Mind

The team at TGFI recently launched a new website for the Big South Conference. Through our initial research process we identified three key initiatives:

- They had an incredible amount of content that needed to be well organized.

- It had to look good on a variety of devices.

- It had to be fast. Really fast.

The first two initiatives are easily addressed as part of our normal research and design processes. Speed, however, is a bit different, for two reasons. First, most projects don’t have the traffic to warrant spending a lot of time on performance. While TGFI always works on database optimization (queries and indexes) as we go, we usually can’t spend extra time on caching or on CSS and JavaScript performance. Second, most projects don’t really need it: their servers can easily handle the traffic they do get.

This project, however, was a bit different. We knew from early research that over half of the people using the site were on mobile devices – one of the highest percentages we’ve ever seen. The site also sees large spikes in traffic when people rush to watch football or basketball games, and those spikes compound when multiple games are streaming simultaneously.

How We Addressed It

There are a multitude of ways to handle this process, but here are some of the key techniques we used to address performance.

- We used the Rails asset pipeline to serve one JavaScript file and one CSS file. Every additional request on a mobile device is a big delay and a letdown to visitors.

- We used fast content delivery networks (CDNs) for video, images and files (PDF, Word, Excel, etc.). These systems are optimized for lots of traffic and high-bandwidth scenarios.

- We compressed images as much as we could without them looking like crap. We also minified and compressed CSS and JavaScript for much smaller downloads.

- We used Varnish as a caching proxy between the visitor and the web servers. It stores CSS, JavaScript, images and in some cases full pages of content in memory for as long as we tell it to. When a response comes from the cache, the request never touches the application or the database, and Varnish responds in just a few milliseconds!

- We cached key pages, information and snippets (articles, menus, etc.) in Memcached. Caching these snippets means we only need to recreate something when it changes.

- We hosted it ourselves so that we have control over everything we can during a visit.
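To make the snippet caching idea a little more concrete, here is a minimal plain-Ruby sketch of one common way to "only recreate something when it changes": put the record's last-modified timestamp in the cache key, so an edit naturally produces a new key. This is an illustration under assumptions, not the code from the project. `SnippetCache` is a hypothetical in-memory stand-in for a Memcached client, and `Article`, `snippet_key` and `render_snippet` are made-up names. In a Rails app, `Rails.cache.fetch` with a Memcached-backed store plays roughly the same role.

```ruby
# Hypothetical stand-in for a Memcached client: a Hash with a
# fetch-or-compute method, plus a miss counter for demonstration.
class SnippetCache
  attr_reader :misses

  def initialize
    @store = {}
    @misses = 0
  end

  # Return the cached value for key; otherwise run the block,
  # store its result under key, and return it.
  def fetch(key)
    return @store[key] if @store.key?(key)

    @misses += 1
    @store[key] = yield
  end
end

# Hypothetical record; updated_at is assumed to change on every edit.
Article = Struct.new(:id, :title, :updated_at)

# The key includes the timestamp, so an edited article maps to a new key
# and the stale snippet is simply never requested again.
def snippet_key(article)
  "articles/#{article.id}-#{article.updated_at.to_i}"
end

def render_snippet(cache, article)
  cache.fetch(snippet_key(article)) do
    "<li>#{article.title}</li>" # stands in for expensive view rendering
  end
end

cache = SnippetCache.new
article = Article.new(1, "Game recap", Time.at(1_000))

render_snippet(cache, article) # first request: rendered and stored
render_snippet(cache, article) # second request: served from the cache

article.title = "Game recap (updated)"
article.updated_at = Time.at(2_000)
render_snippet(cache, article) # edited record: new key, rendered again
```

With this approach nothing has to be explicitly deleted when content changes. Old entries are left behind and, in a real Memcached setup, are eventually evicted as memory fills up.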

OK, But How Well Does This Really Work?

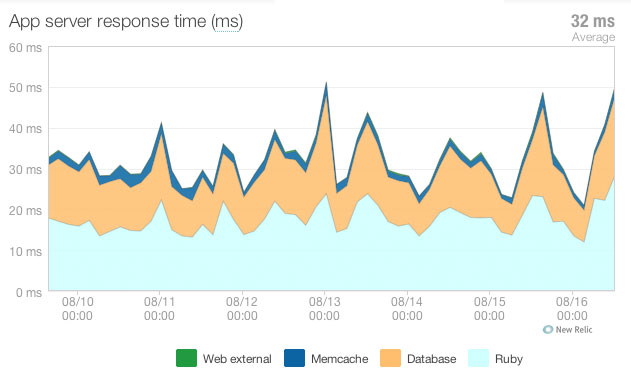

Extremely well. Here is our performance graph from the last week showing an average response time of just 32 milliseconds. If I had to guess, the average across most of our applications is somewhere between 750 milliseconds and 1 second. That’s a big difference.

I should be honest here though – that’s a misleading graph. It’s only showing the requests that make it to the Ruby on Rails application. If you take Varnish caching and our web servers into account, our average response time is under ten milliseconds. Even under heavier spikes of traffic, there has been no perceptible change.

For all those naysayers out there, you are wrong – Rails can scale. You just have to know what you’re doing.